Your buyer has already moved to the next vendor.

The traditional B2B buyer journey is no longer a series of predictable clicks leading to your conversion funnel; it is now a quest for synthesized answers. Data confirms this shift: 67% of buyers now use GenAI as a core part of their purchasing process. More significantly, 25% of them now use AI tools, rather than traditional search engines, as their primary research engine for vendor discovery.

Your mandate as a marketing leader has shifted accordingly. Success is no longer measured only by how often your brand appears in search results, but by how accurately it is represented in AI-generated answers. You are now operating in a “brands are summarized first” environment. With nearly 50% of searches triggering an AI-generated summary, the traditional “blue link” is no longer the first touchpoint for your prospects. This list of organic search results has dominated buyer discovery for nearly three decades, but it is being replaced by a synthesized paragraph that answers your buyer’s questions before they ever click through to your domain. If their first impression of your brand is a summary generated by an AI model rather than your own homepage, the architectural integrity of your content becomes your only line of defense.

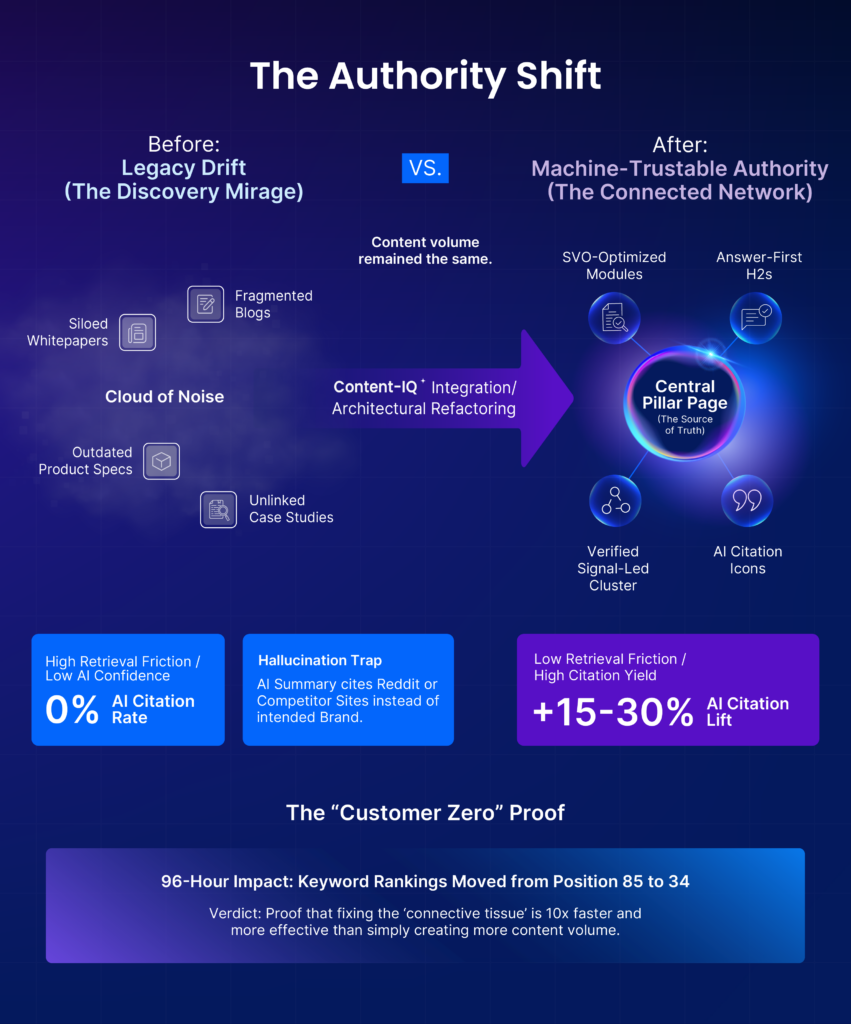

When your content architecture is fragmented, AI systems do not simply report a lack of information. Instead, you fall into the hallucination trap. Because AI systems prioritize providing a complete answer, they fill knowledge vacuums with competitor data, third-party sources, or outdated archives to complete their summary of your brand. In this new landscape, market irrelevance is the immediate consequence of clinging to legacy “visibility” strategies.

Why AI Systems Can’t “Read” You

For years, you may have treated technical SEO as a backend maintenance task. In the age of AI, technical friction, specifically RAG (Retrieval-Augmented Generation) failure, has become a significant brand risk.

RAG systems do not care about your carefully crafted brand story. They care about finding “liftable chunks” of high-confidence data to answer a buyer’s query. If your content is fragmented or messy, an AI model cannot verify your current value proposition. This technical friction is not just a crawl error. It is a signal failure. When your own domain fails to provide a machine-trustable answer, AI systems are forced to source their definition of your brand from the open web.

Consider the impact on your market representation. Imagine a buyer asks an AI assistant to compare your current software capabilities against a rival’s. If your site architecture is inconsistent, the AI model might blend a three-year-old blog post with a third-party forum thread to define your current features. While you are focused on visibility, the AI system is creating a version of your brand that is fundamentally inaccurate.

This blended definition is a direct cause of machine hallucination. It creates a dangerous trust gap between your brand and the AI systems that summarize it. AI systems evaluate your content based on how easily it can be lifted and synthesized. If your architecture makes your data difficult to parse, AI loses confidence in you as a primary source of truth. Your own content is effectively confusing the systems your buyers trust most.

To protect your pipeline, you must move from hosting “pages” to architecting machine-trustable data. Bridging this gap means ensuring your content architecture is consistent, connected, and designed for machine-level accuracy.

How AI Systems Evaluate & Trust Your Content: The Four Signals

To move from content volume to machine-trustable authority, you must master the signals AI systems use to decide whether your content is understood, trusted, and reused. This is not a new SEO framework. These are the building blocks for your content’s architectural integrity. They determine whether an AI system lifts your data with a high-confidence score or ignores you entirely.

Clarity of Meaning: Semantic Precision

AI systems do not “interpret” ambiguity the way humans do. If your content does not clearly define what your product is, who it is for, and how it compares, the model fills in the gaps, often incorrectly. You must define your brand terms so clearly that a machine cannot misinterpret your core value proposition.

The Signal Shift: If you describe your software as an “end-to-end solution,” AI systems might categorize it as anything from a CRM to a logistics tool. If you define it as a “GraphQL-based Headless Commerce Engine,” you provide the precise anchor the model needs to categorize you accurately.

Answer-First Structure: Atomic Chunking

AI systems favor content that delivers clear, concise answers early. If your key ideas are buried or require interpretation, they are less likely to be extracted and reused. This process of atomic chunking ensures your information is broken into small, self-contained units that a machine can easily lift.

The Signal Shift: Instead of a long-form whitepaper where the “benefit” is buried on page six, you provide a structured section: “3 Primary Security Benefits for Fintech.” This allows AI systems to grab that “atom” of information and cite it instantly in a summary.
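
To make atomic chunking concrete, here is a minimal Python sketch of how a retrieval pipeline might split a page into self-contained, heading-led units. It assumes headings are marked by lines starting with `## `, and the `Chunk` type, the `chunk_by_heading` function, and the sample page text are hypothetical illustrations; real AI systems use their own chunking strategies, often based on token counts rather than headings.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """One self-contained unit: a heading plus the text that answers it."""
    heading: str
    body: str


def chunk_by_heading(text: str, marker: str = "## ") -> list[Chunk]:
    """Start a new chunk at every heading line (hypothetical illustration)."""
    chunks: list[Chunk] = []
    heading = ""
    body_lines: list[str] = []

    def flush() -> None:
        # Save the current heading and body, skipping empty leading content.
        body = "\n".join(body_lines).strip()
        if heading or body:
            chunks.append(Chunk(heading, body))

    for line in text.splitlines():
        if line.startswith(marker):
            flush()
            heading = line[len(marker):].strip()
            body_lines = []
        else:
            body_lines.append(line)
    flush()
    return chunks


# Hypothetical sample page with one answer-first section.
page = """## 3 Primary Security Benefits for Fintech
1. Encryption at rest and in transit.
2. Role-based access control.
3. Audit logging for every transaction.
## Pricing
Plans are billed annually."""

for chunk in chunk_by_heading(page):
    print(f"[{chunk.heading}] {chunk.body.splitlines()[0]}")
```

Because each chunk carries its own heading, a unit like “3 Primary Security Benefits for Fintech” can be lifted and cited without the rest of the page. A benefit buried mid-paragraph under a generic heading would not survive this split as a clean answer.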

Context and Connectivity: The Knowledge Graph

AI evaluates how ideas connect across your site. Disconnected pages weaken confidence. Structured relationships between topics reinforce authority. If your product specs are not explicitly linked to your case studies, AI models treat them as unrelated facts.

The Signal Shift: If your “Pricing” and “Features” pages are not linked through your data structure, AI systems may tell a buyer you lack transparent pricing. By connecting them, you ensure AI sees the context: pricing is $X because it includes Feature Y.
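
One widely used way to make these relationships explicit is schema.org structured data in JSON-LD, where `@id` references tie entities on different pages together. The minimal Python sketch below assumes a hypothetical product (`ExampleApp`), a placeholder domain (`example.com`), and a placeholder price standing in for $X; it links the features entity and the pricing offer in one `@graph`. It illustrates the technique only and does not guarantee how any AI system will weigh the markup.

```python
import json

# Hypothetical site and product details; replace with your own.
SITE = "https://example.com"

product = {
    "@type": "SoftwareApplication",
    "@id": f"{SITE}/features#product",
    "name": "ExampleApp",
    "applicationCategory": "BusinessApplication",
    "featureList": ["Feature Y"],
    # Point to the offer by @id so pricing and features form one entity graph.
    "offers": {"@id": f"{SITE}/pricing#offer"},
}

offer = {
    "@type": "Offer",
    "@id": f"{SITE}/pricing#offer",
    "url": f"{SITE}/pricing",
    "price": "99.00",  # Placeholder standing in for $X.
    "priceCurrency": "USD",
    # Link back to the product the price applies to.
    "itemOffered": {"@id": f"{SITE}/features#product"},
}

graph = {"@context": "https://schema.org", "@graph": [product, offer]}

print(json.dumps(graph, indent=2))
```

The printed output can be embedded in a `<script type="application/ld+json">` tag on both the Features and Pricing pages, so either page points to the same connected entities.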

Specificity and Evidence: Data over Fluff

AI prioritizes content grounded in concrete facts, named entities, and verifiable claims. Vague language is more likely to be ignored or replaced. In the summarized-first world, the brand with the most verifiable data wins the citation.

The Signal Shift: A competitor site says, “Our tool is the fastest on the market.” Your site says, “Our tool processes 50,000 transactions per second with 99.9% uptime.” AI systems are more likely to choose your data because it is a verifiable signal rather than an unproven claim.

When you provide these specific signals, you move from being a passive subject of AI summaries to an active architect of your digital representation. Without this foundation, the technical friction in your content becomes a direct barrier to your market share. This leads to the critical question for your team: what is the actual price of being “unverifiable” to the AI systems your buyers trust most?

The Cost of Inaction: The Invisible Brand Risk

The transition to an AI-first market is not a slow evolution. It is a fundamental shift in how your brand is valued and verified. If you continue to rely on legacy visibility strategies, you face three distinct business risks:

The Retrieval Penalty

AI discovery engines operate on strict computational budgets. If your content architecture remains a “black box” that requires excessive processing to parse, the system will prioritize low-friction competitors who have already optimized for machine readability.

The Scenario: When a buyer asks for a “Fast Fintech Solution,” AI systems ignore your unoptimized site and source a competitor whose data is easier to lift. You aren’t just losing a ranking; you are being filtered out of the conversation due to technical inefficiency.

The “Demarketing” Effect

When you allow “data silence” on your own domain, you effectively hand your brand narrative to your rivals. AI models will define your category via your competitor’s attributes and your outdated archives.

The Scenario: If your primary signals are weak, AI systems define your pricing and features using a 2021 Reddit thread or a rival’s comparison page. This erodes your unique competitive advantage and positions you as a secondary player in your own market.

The Shortlist Exclusion

With nearly half of B2B research now occurring in AI interfaces before a buyer ever clicks a link, every day spent with a legacy architecture is a day your brand is being ignored during the earliest stage of the buyer’s journey.

The Scenario: A prospect asks an AI to “Recommend 3 vendors for Enterprise ERP.” Because your content architecture lacks connectivity, the AI cannot verify your latest security certifications and excludes you from the vendor set before you even know the deal exists.

Reversing these risks isn’t a matter of content volume. It’s a matter of architectural integrity.

The Customer Zero Story

To validate that these risks are reversible, DemandScience applied the Content-IQ methodology to its own digital ecosystem, specifically targeting the highly competitive “B2B display advertising” category. Rather than creating more content volume, the team used this architectural framework to prioritize 12 strategic articles and refactor its technical connectivity.

The results suggest that AI systems are waiting for a signal they can trust:

- Ranking Velocity: Within four days, the average keyword ranking across the cluster improved from position 85 to 34.

- AI Inclusion: Within five days, the initiative achieved direct inclusion in AI overviews for high-traffic terms.

- Efficiency vs. Volume: This shift was 10x more effective than traditional content creation, indicating that structured authority is the primary driver of visibility in a summarized-first world.

Every day you delay this shift, you are paying for a pipeline that is being intercepted by your more AI-precise competitors. Meet with our Labs expert team to discuss how to move from “isolated assets” to a structured, machine-trustable content architecture. Learn how Content-IQ helps ensure your brand commands discovery.

Establishing a High-Yield Governance Loop

To protect your brand in an AI-first market, you must move from a “one-and-done” project mindset to a continuous system that prevents legacy drift. Brand representation is no longer static; AI models are constantly re-synthesizing the web based on the most recent, high-confidence data they can retrieve. If your signals are not persistent and adaptive, your authority erodes.

The Intelligence Layer: Monitoring the Question

High-yield architecture must be fueled by verified buyer signals. Identify the real-time questions your ICP is asking and map them directly to your content’s semantic precision.

The Influence: If you aren’t monitoring the evolving questions, you cannot architect the answers. This layer ensures that as buyer intent shifts, AI models continue to select your data as the primary source for their answers, directly influencing the prospect’s early-stage research.

The Orchestration Layer: Dynamic Brand Defense

A future-proof site requires a modular framework that allows you to swap proof points or machine-trustable descriptions as market trends evolve.

The Influence: By maintaining a low-friction architecture, you ensure your newest features and certifications are immediately liftable by AI. This prevents the retrieval penalty and ensures your brand is synthesized into the buyer’s shortlist accurately, rather than being filtered out due to technical friction.

The Feedback Loop: Measuring Citation Yield

Success is measured by citation yield, a dedicated metric that tracks how often and how accurately AI discovery engines retrieve and summarize your core brand pillars versus citing external, unverified sources.

The Measurement in Practice: Audit AI-generated summaries for high-intent industry queries. Quantify how many brand mentions are sourced from your domain versus third-party archives, providing a direct authority score for your content architecture.
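
As a worked illustration, citation yield can be expressed as the share of audited brand mentions whose cited source sits on your own domain. The Python sketch below assumes you have already collected, by hand or with your own tooling, the source URL behind each brand mention in a set of AI-generated summaries; the `citation_yield` and `is_own_domain` functions, the sample URLs, and the domain `example.com` are hypothetical.

```python
from urllib.parse import urlparse


def is_own_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    domain = domain.lower()
    return host == domain or host.endswith("." + domain)


def citation_yield(source_urls: list[str], domain: str) -> float:
    """Share of brand mentions sourced from your own domain (0.0 to 1.0)."""
    if not source_urls:
        return 0.0
    owned = sum(is_own_domain(url, domain) for url in source_urls)
    return owned / len(source_urls)


# Hypothetical audit: the source behind each brand mention found in
# AI-generated summaries for a set of high-intent queries.
mentions = [
    "https://www.example.com/pricing",
    "https://example.com/blog/security-certifications",
    "https://www.reddit.com/r/example_thread",
    "https://competitor.example.org/comparison",
]

print(f"Citation yield: {citation_yield(mentions, 'example.com'):.0%}")
```

With two of four sampled mentions sourced from `example.com`, this hypothetical audit scores 50%. The sketch measures only how often your domain is the source; judging how accurately each summary represents you still requires human review.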

The Pipeline Impact: A higher citation yield correlates directly with shortlist inclusion. When AI systems trust your brand enough to cite you, the buyer trusts AI enough to evaluate you.

Conclusion: Command Your Discovery or Be Erased By It

The era of “content for the sake of volume” has ended. In an environment where you are summarized first, the lack of a machine-trustable content architecture is no longer a technical gap; it is a surrender of your brand identity.

By clinging to legacy visibility strategies, you are essentially providing the “data silence” that forces AI systems to look elsewhere. You are allowing the systems your buyers trust most to define your value proposition through the lens of your most technically precise rivals. Today, the brands that win aren’t the ones with the most pages; they are the ones with the most verifiable integrity.

The shift is binary: You can either remain a passive subject of AI hallucinations, or you can become the active architect of your own representation and the discovery that leads to your next deal.

Stop Leaving Your AI Representation to Chance.

In a world where 47% of research starts in AI tools, “good enough” content architecture is a billion-dollar blind spot. Most B2B brands are currently invisible to the systems their buyers trust most, or worse, they are being misrepresented by them.

Request your Market Intelligence Report + Strategy Consultation.

Identify the surging topics and high-value accounts in your category. See exactly where your current content is failing to signal the machine and where competitors are filling the vacuum.