Clarity Beats Cleverness: How To Get Found When Algorithms Decide What’s Seen

February 26, 2026

In our last webinar on Content Discovery in the Age of AI, one idea came through loud and clear: discovery is no longer primarily human-led. More often, machines are your first audience. They scan, interpret, summarize, and decide what gets surfaced before a buyer ever clicks.

That shift redefines what “good content” means. Ranking is no longer the finish line. If AI systems misinterpret you, they will represent you incorrectly at scale. And that becomes a trust problem.

Or, as we put it in the session, “The question isn’t just can buyers find us, it’s when AI explains us, does it get us right?”

Content Discovery Shifted: Machines Are the First Audience

For years, teams optimized for traditional search behaviors. You wrote for people, tuned for algorithms, and measured performance through clicks, rankings, and sessions. That model still matters, but it is no longer the full picture.

Today, AI systems increasingly sit between your content and your buyer. They do not simply retrieve results. They interpret meaning. They extract “answers.” They condense your positioning into a few lines. They decide what to cite, what to omit, and how to frame what your company does.

That is why content discovery has become less about winning a single search result and more about consistently earning accurate representation across many systems. Buyers may never see your page first. They may see a summary of your page, a citation from a third-party listicle, or a stitched-together recommendation across sources.

In practice, that means you are optimizing for two audiences at once:

- AI systems that need clarity, structure, and context to interpret your content correctly

- Human buyers who still need persuasion, confidence, and proof once they land on your site

You cannot sacrifice either. But you must design for the reality that AI now goes first. If you do not, you are leaving interpretation up to the model.

The New Risk: Content Misinterpretation (Accuracy and Control)

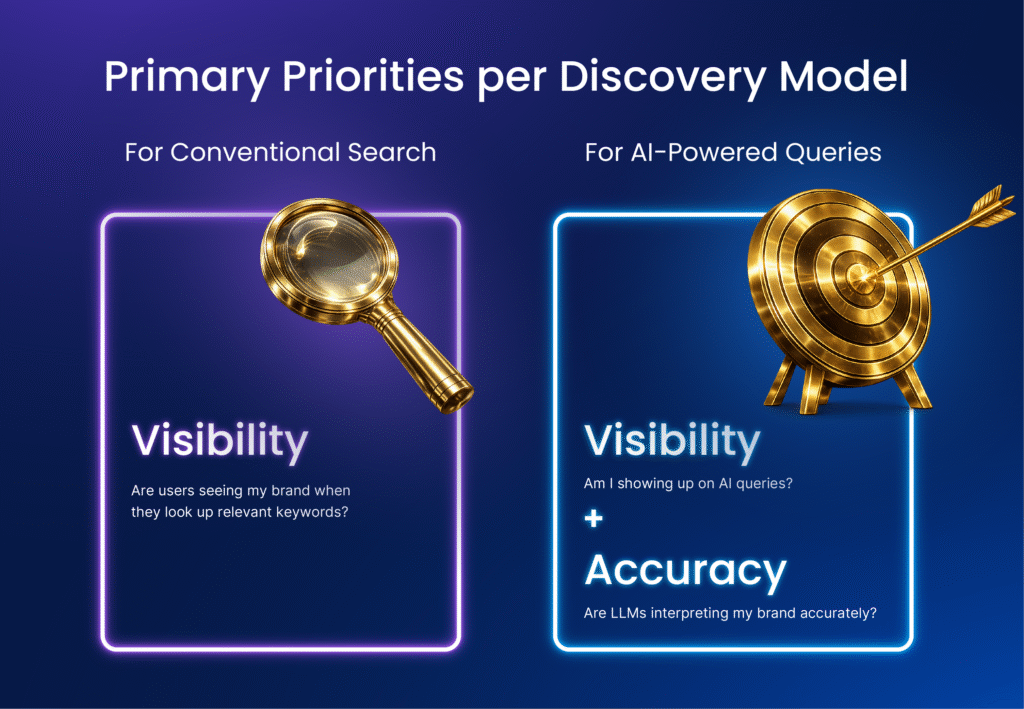

When AI becomes the first interpreter, your primary risk shifts. Visibility is still a concern, but accuracy becomes equally urgent.

In the webinar poll, “losing visibility” and “not being sure how AI evaluates content” were nearly neck-and-neck. That is telling. Many teams are not just worried about being absent. They are worried about being misrepresented. Present but summarized inaccurately. Present but compared incorrectly. Present but framed out of context.

AI summaries can unintentionally distort your message when:

- Your positioning is buried under brand language or abstract taglines

- Key claims are implied instead of stated directly

- Comparisons rely on visuals, tables, or designs that do not translate well to machine interpretation

- Your site contains older pages with outdated statements that conflict with current messaging

This is why one of the most important reframes from the session was that AI search can be both a growth lever and a brand protection strategy. Visibility earns you consideration. Accuracy earns you trust.

As our webinar speaker put it, “We’re not optimizing for traffic. We’re optimizing for more quality of traffic.” In other words, the objective is not volume. It is the right buyer arriving with the right understanding of what you do.

If AI compresses your positioning into something generic, you are no longer competing on differentiation. You are competing on price.

Suppose an AI summary says, “Company X is a B2B lead generation provider,” but your differentiation is precision targeting and revenue accountability. The summary has just repositioned you into a commoditized bucket. That’s not just a branding issue. It changes the competitive set the buyer sees.

What AI Prefers: Lists, Comparisons, FAQs, and Definitional Hubs

Many marketers assume AI discovery is just “SEO, but smarter.” The reality is that AI systems often favor content patterns that are simple to parse and cite.

One of the biggest surprises shared in the webinar was how frequently LLMs cite list-based and comparison content. As Megan Cabrera noted, “A lot of what we see come up in citations is listicle and comparison content.” She also called out why it happens: “They look for those very simply formatted pieces of content because that is easy for the LLM to interpret.”

This does not mean your strategy should turn into shallow, high-volume listicles. It means your content must be structured so that a model does not have to infer meaning. If meaning is implied instead of stated, AI will fill the gap for you.

The formats that tend to help include:

- FAQs on product and solution pages that answer real buyer questions directly

- Comparison-style content that makes distinctions explicit in text, not only through design

- Definitional content hubs that organize related concepts under a consistent taxonomy

- Explainers that break complex topics into clear sections with descriptive headings

- Short, precise claims backed by credible proof points, data, and sourcing

The definitional hub concept came up as a concrete example. Sophos maintains a “Cybersecurity Explained” section designed to help both end users and AI systems understand complex concepts and long-tail questions. When you group related content under a shared structure and schema, you reinforce your authority and improve interpretability at scale.

Just as importantly, it reduces fragmentation. AI systems can better “connect the dots” across your content when you connect them first.

Why “Pretty” Can Break Readability: Tables, Infographics, and Text-in-Images

A difficult truth for modern teams is that some of the formats we use to communicate clearly to humans are not reliably interpreted by AI.

The webinar gave a direct example: feature tables. A human can scan a product comparison table and instantly understand what the checks and X’s mean. An AI system may not interpret that layout with the same context, especially if meaning is encoded visually rather than in readable text.

As Megan explained, “Agents cannot read that the same as a human does.”

The same risk applies to infographics where key messaging is baked into the image. If the most important product claims live inside creative files, you are forcing the machine to guess or skip. Many teams are now pushing for a simple rule: keep meaningful text in the page content itself, not only inside imagery.

This is not a call to abandon creativity. The webinar drew an important distinction here: creative still matters for the human buyer experience once someone lands on your site. Design influences trust, confidence, and conversion.

The shift is about separating two functions:

- Machine readability: ensure the “core truth” is expressed in clean, structured text

- Human experience: use design to make the page persuasive, intuitive, and differentiated

The best pages do both. They do not choose between readability and beauty. They make sure beauty does not hide the message.
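One way to spot pages where meaning may be locked inside imagery is to list the images that carry no text alternative. The sketch below is illustrative only: using the `alt` attribute as a proxy is our assumption rather than something the webinar prescribed, and the class name, file names, and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class ImageAltAudit(HTMLParser):
    """Collect the src of every <img> that has no non-empty alt text."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # A valueless or absent alt attribute is treated as empty.
        alt = (attributes.get("alt") or "").strip()
        if not alt:
            self.missing_alt.append(attributes.get("src", ""))


# Hypothetical page fragment for illustration.
html = """
<h2>Plans</h2>
<img src="comparison-infographic.png">
<img src="logo.png" alt="Company X logo">
<img src="feature-table.png" alt="">
"""

audit = ImageAltAudit()
audit.feed(html)
print(audit.missing_alt)
```

An empty `alt` is legitimate for purely decorative images, so treat the output as a review list, not a list of errors. The real fix is still the one described above: state the claim in the page text itself.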

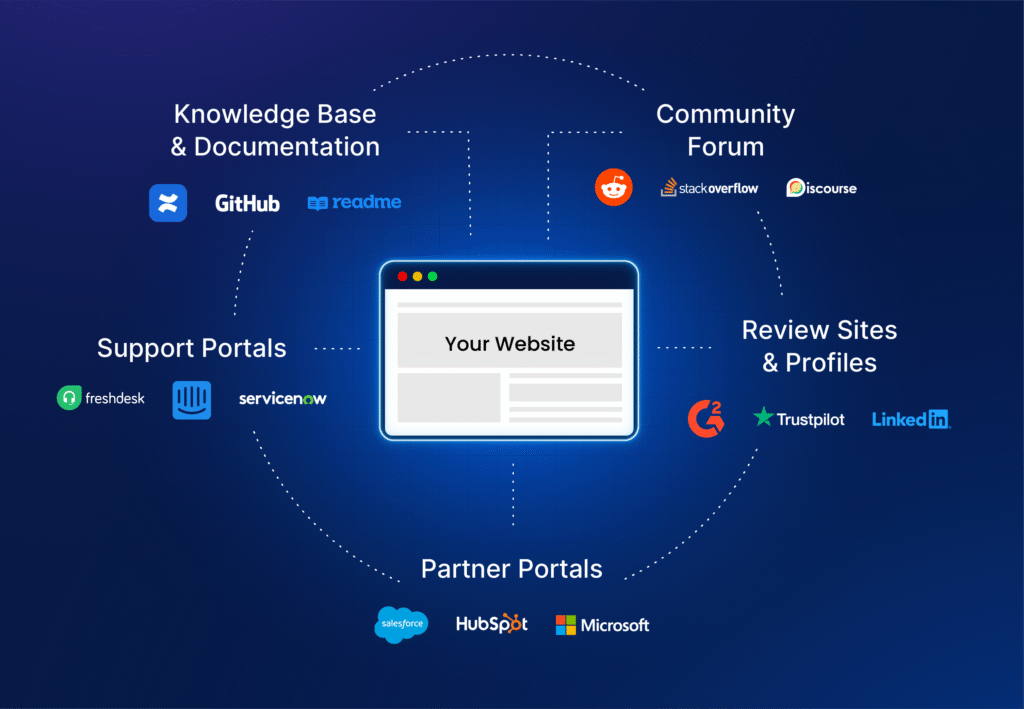

The Ecosystem Effect: Your Website Is Not Your Only Site Anymore

Another key point from the webinar is that AI systems do not evaluate only your marketing site. They evaluate your broader digital footprint.

That includes:

- Knowledge bases and documentation

- Community forums

- Support portals

- Partner portals

- Review sites and third-party profiles

In the session, this was described plainly: it is not just your core website. “It’s your knowledge base, your support site, your community site as well.” Many LLMs will treat those surfaces as a single entity under your brand and use them to triangulate credibility.

This cuts both ways.

If your ecosystem is consistent, it reinforces authority. If it is inconsistent, AI does not resolve the inconsistency. It amplifies it.

This is also why content governance matters more now. It is not enough for marketing to be aligned internally. Your product documentation, support answers, and community responses can all influence what AI systems believe is “true” about you.

For legacy brands, this becomes especially tricky. There is often a long tail of older pages that are still indexed, still crawled, and still cited. As Megan shared, the challenge is not that you lack content. The challenge is that some of it is no longer accurate.

The answer is not to hide your history. The answer is to manage it responsibly through regular updates, deprecation workflows, and consistent formatting across the ecosystem.

Measurement Reality: Canonical Prompts, Multi-Engine Variance, and Baselines

Measurement is where many teams feel the most uncertainty. In the second webinar poll, the top challenge was “credibility, sourcing, and accuracy,” followed by measurement and proof. That order makes sense. If you cannot validate how you are being interpreted and cited, it becomes difficult to prove progress.

The webinar speakers shared a practical approach: establish a baseline, then measure consistently over time.

At Sophos, the team seeded a library of canonical prompts into their AI measurement tool. These prompts act as always-on benchmarks across products and in aggregate. The point is not that they are perfect representations of buyer behavior. The point is that they are stable enough to track month-over-month progress.

This matters because prompt sets can quickly become a moving goalpost. As Megan put it, “If you just keep adding a different prompt library all the time you’re moving the goalpost.”

There is also another reality. Results vary across engines and models. The speakers noted they track across multiple LLMs, and model updates can shift visibility and citations. That multi-engine variance means “one-size-fits-all” optimization is unlikely to hold.

The most defensible KPI stack discussed in the webinar combined:

- Visibility across canonical prompts

- Citation presence and quality

- AI referral traffic and engagement

- Competitive comparisons where relevant

- Content-level improvements that correlate with stronger visibility over time

What teams still want, but often cannot get yet, is better visibility into real prompt behavior. Many organizations are still operating without direct access to the full range of buyer prompts and language patterns.

Until that data becomes more widely available, the most defensible approach is disciplined measurement with a stable baseline, paired with continuous buyer input from sales conversations and customer interactions.
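To make the stable-baseline idea concrete, here is a minimal sketch of how visibility across a fixed prompt set might be tallied per month and per engine. It is not the tool or method the speakers used. The prompt IDs, engine names, and observation format are all hypothetical, and the sample values are made up purely to show the arithmetic.

```python
from collections import defaultdict

# Hypothetical canonical prompt IDs. The set stays fixed between runs.
CANONICAL_PROMPTS = frozenset({"p1", "p2", "p3", "p4"})


def visibility_by_engine(observations, prompts=CANONICAL_PROMPTS):
    """Share of canonical prompts where the brand appeared, per (month, engine).

    observations: iterable of (month, engine, prompt_id, mentioned) tuples.
    The denominator is always the full canonical set, so a prompt that was
    not run for an engine counts as not visible.
    """
    seen = set()
    hits = defaultdict(set)
    for month, engine, prompt_id, mentioned in observations:
        if prompt_id not in prompts:
            continue  # ad hoc prompts are ignored so the baseline does not move
        seen.add((month, engine))
        if mentioned:
            hits[(month, engine)].add(prompt_id)
    return {key: len(hits[key]) / len(prompts) for key in sorted(seen)}


def run(month, engine, results):
    """Helper that expands {prompt_id: mentioned} into observation tuples."""
    return [(month, engine, pid, hit) for pid, hit in results.items()]


# Made-up sample data for two months and two engines.
observations = (
    run("2026-01", "engine_a", {"p1": True, "p2": False, "p3": True, "p4": False})
    + run("2026-02", "engine_a", {"p1": True, "p2": True, "p3": True, "p4": False})
    + run("2026-02", "engine_b", {"p1": False, "p2": False, "p3": True, "p4": False})
    + [("2026-02", "engine_a", "adhoc-9", True)]  # not canonical, ignored
)

scores = visibility_by_engine(observations)
for (month, engine), share in scores.items():
    print(month, engine, f"{share:.0%}")
```

Keeping the denominator fixed is the design choice that matters. New prompts can be tracked separately, but mixing them into the same score would make month-over-month comparisons unreliable.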

90-Day Action Plan: What to Do Now

If you want impact in the next 90 days, you do not need to reinvent your entire content strategy. You need to tighten what already exists, remove ambiguity, and make your most important pages easy to interpret and cite.

Here is the practical playbook that came through most clearly in the webinar.

Run a targeted content audit

You do not need to review every URL. Start with pages that directly influence pipeline:

- Product pages

- Solution pages

- Category pages

- “How it works” pages

- Key thought leadership or pillar pages that shape positioning

Check when each page was last updated. If it has been more than 12 months, assume it contains stale claims, outdated stats, or missing context.
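If you can export URLs and last-updated dates from your CMS, the 12-month check takes only a few lines. This is a minimal sketch under stated assumptions: the `Page` record, the URLs, and the dates are hypothetical, and 12 months is approximated as 365 days.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Page:
    url: str
    last_updated: date


def stale_pages(pages, today, max_age_days=365):
    """Return pages last updated more than max_age_days before today."""
    return [p for p in pages if (today - p.last_updated).days > max_age_days]


# Hypothetical export for illustration.
pages = [
    Page("/products/example", date(2024, 11, 3)),
    Page("/solutions/example", date(2025, 9, 18)),
    Page("/how-it-works", date(2023, 6, 1)),
]

flagged = stale_pages(pages, today=date(2026, 2, 26))
for p in flagged:
    print(p.url, p.last_updated.isoformat())
```

A flagged page is not necessarily wrong. It is simply first in line for a manual review of claims, stats, and context.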

Refresh for accuracy and proof

Update the details AI and buyers will both rely on:

- Current capabilities and scope

- Updated statistics and evidence

- Recent customer proof points, reviews, and validation

- Clear positioning statements that do not depend on marketing jargon

Add FAQs where buyers need them most

This was called out as a simple, high-leverage change. FAQs make content more extractable and help both humans and AI systems reach accurate understanding faster.

The goal is not keyword stuffing. The goal is direct answers to real buyer questions.
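Some teams also express FAQs as structured data alongside the visible text. The sketch below builds a schema.org `FAQPage` object as JSON-LD. The webinar did not specify any particular markup, so treat this as one possible approach. The questions and answers are placeholders, and whether a given AI system reads this markup is not something the session established.

```python
import json


def faq_jsonld(faqs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


# Placeholder content for illustration.
faqs = [
    (
        "What does Company X do?",
        "Company X provides B2B lead generation with precision targeting "
        "and revenue accountability.",
    ),
    (
        "Who is Company X for?",
        "B2B marketing teams that are measured on pipeline and revenue.",
    ),
]

data = faq_jsonld(faqs)
markup = json.dumps(data, indent=2)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The answers in the markup should match the visible FAQ text word for word, so the page and its structured data never tell two different stories.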

Self-test your brand experience

Do not rely only on tools. Search for the questions buyers actually ask, including practical tasks and “how-to” queries tied to your product.

The webinar shared a strong example: searching for licensing instructions revealed a workflow problem that was pushing buyers into a free trial flow incorrectly, which then distorted conversion reporting. That is not only a discovery issue. It is an operational issue caused by inaccurate content pathways.

Fix readability blockers

Identify where your content depends on visual interpretation:

- Feature tables that require context

- Infographics with text embedded in images

- Layouts that hide key meaning behind design decisions

Preserve the design for humans, but ensure the same meaning exists in readable page text.
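For feature tables, one option is to generate a plain-text companion from the same data that drives the checkmarks. The following is a rough sketch, not a prescribed method. The function name, plan names, and features are hypothetical.

```python
def describe_features(matrix):
    """Turn {product: {feature: bool}} into one explicit sentence per product."""
    sentences = []
    for product, features in matrix.items():
        included = [name for name, has in features.items() if has]
        missing = [name for name, has in features.items() if not has]
        parts = []
        if included:
            parts.append(f"includes {', '.join(included)}")
        if missing:
            parts.append(f"does not include {', '.join(missing)}")
        if not parts:
            continue  # nothing to say about a product with no listed features
        sentences.append(f"{product} " + " and ".join(parts) + ".")
    return sentences


# Hypothetical comparison data for illustration.
matrix = {
    "Plan A": {"single sign-on": True, "audit logs": False},
    "Plan B": {"single sign-on": True, "audit logs": True},
}

sentences = describe_features(matrix)
for sentence in sentences:
    print(sentence)
```

The table stays on the page for human readers. The generated sentences sit in the page text, so the same distinctions are stated explicitly and not encoded only in checks and X’s.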

Content Strategy Plus Third-Party Presence

A final reality from the webinar is that AI citations often come from outside your site. That means your content strategy cannot stop at your domain.

Your brand presence across third-party sources influences how AI systems describe you, including:

- Reviews and comparison sites

- Communities and forums

- Social platforms

- Reddit and other high-signal discussion spaces

- Industry publications and digital PR placements

This is part of why the webinar framed AI search as a return to strong fundamentals. If AI is drawing from the broader web to decide what is credible, then credibility becomes a distribution strategy, not just a publishing strategy.

The teams that win will not be the ones producing the most content. They will be the ones producing content that is clear, consistent, current, and easy to interpret. They will build a footprint that reinforces the same truth across their ecosystem and the wider market.

Because in the age of AI discovery, clever messaging is not what gets you found. Clear messaging is what gets you cited, trusted, and chosen.

DemandScience’s Content-IQ brings together three powerful signals: what buyers search for, what they engage with, and how they behave on your site. It eliminates guesswork and unites visibility, creativity, and personalization so content is not just findable but meaningful.